🍔🧠 Why Discord Didn't Rewrite Elixir (They Built This Instead)

PLUS: GPT-5.4 arrives 🤖, 60 year old tries Claude Code ⚡, AI investing stack 💰

Happy Monday! ☀️

Welcome to the 199 new hungry minds who have joined us since last Monday!

If you aren’t subscribed yet, join smart, curious, and hungry folks by subscribing here.

📚 Software Engineering Articles

Defeating deepfakes by stopping laptop farms and insider threats

Google quantum-proofs HTTPS with clever math tricks

How I use Claude code for productive development workflows

Balyasny built AI research engine for smarter investing decisions

System design interview framework that actually works

🗞️ Tech and AI Trends

Meta’s AI smart glasses raise serious privacy concerns

Something brewing with Qwen — OpenAI’s new challenger emerges

OpenAI launches GPT-5.4, pushing AI capabilities further

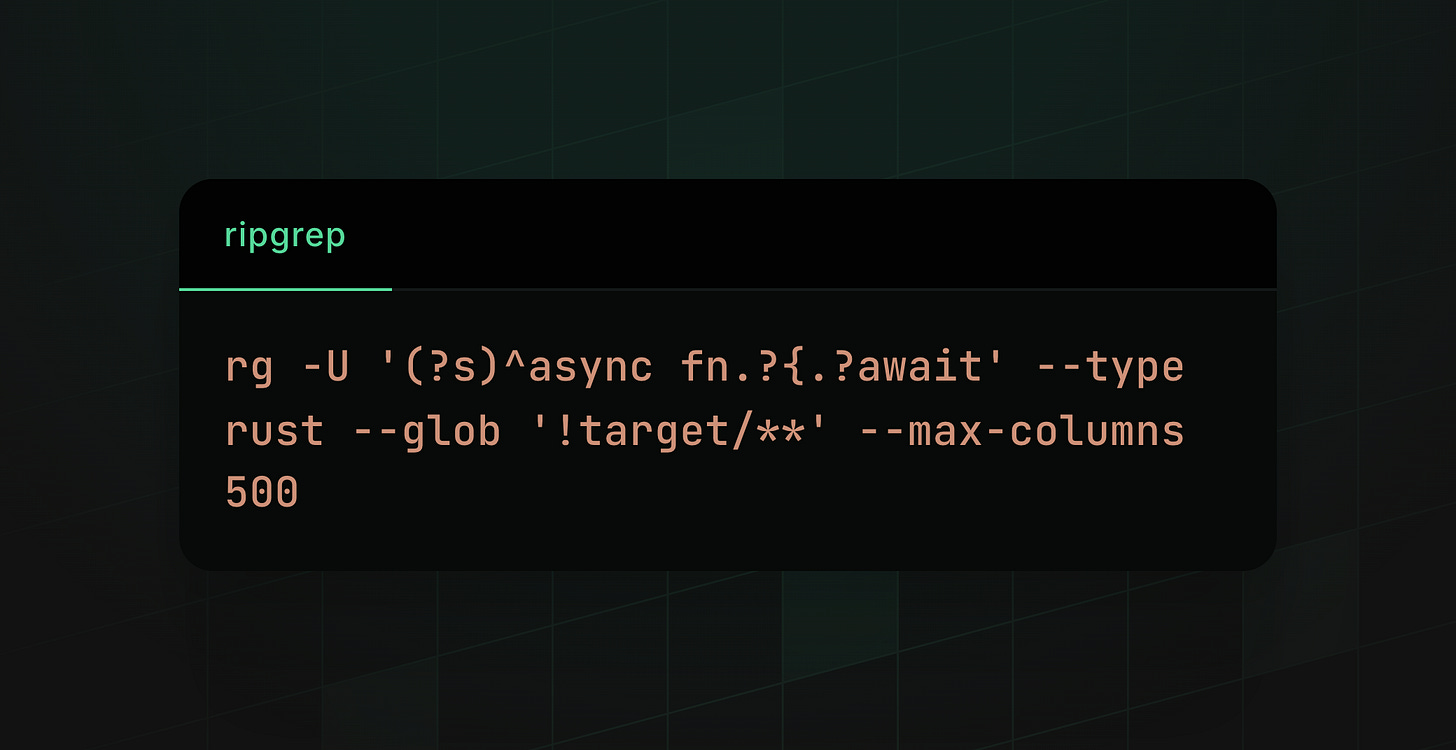

👨🏻💻 Coding Tip

Use ripgrep’s multiline mode with type filters for blazing-fast code search across massive codebases

How Discord Added Distributed Tracing to Elixir Without Breaking Everything 🧬

Discord needed visibility into why their Elixir services sometimes lag when handling millions of concurrent users. Their metrics and logs told them what was slow, but not why or how it cascaded through the system. Enter distributed tracing—the observability tool that shows you the complete journey of a user action from API to client.

The challenge: Elixir’s message-passing architecture doesn’t have built-in metadata layers like HTTP headers, so propagating trace context between processes required building a custom solution without downtime.

Implementation highlights:

Transport: A thin wrapper library: Created an

Envelopeprimitive that automatically wraps messages with trace context, making integration ergonomic and zero-copyGenServer drop-in replacements: Provided wrapped versions of

callandcastfunctions so developers didn’t need to think about trace propagationGradual rollout with runtime config: Mixed old and new communication patterns, allowing zero-downtime deployment across thousands of nodes

Dynamic sampling based on fanout size: Detected massive fanouts (messages to millions of sessions) and reduced sampling rates adaptively—from 100% for single recipients to 0.1% for 10k+ recipients

Surgical performance optimizations: Only propagate sampled trace contexts, skip root span creation post-fanout, and fast-path the sampling flag check to dodge expensive unpacking

Results and learnings:

Complete visibility: Track 16-minute session connection delays and quantify user impact during outages with end-to-end traces

Scalable observability: Dropped CPU overhead from tracing by 10+ percentage points through aggressive optimization and smart sampling

Production-ready infrastructure: Deployed across Discord’s entire Elixir stack without a single incident or service restart

Discord proved you can bolt sophisticated distributed tracing onto a message-passing architecture without sacrificing performance. Their Transport library is a masterclass in pragmatic observability—simple primitives, massive impact, zero friction.

The lesson here? Sometimes the best infrastructure doesn’t solve the problem elegantly. It solves it cheaply. And at Discord’s scale, cheap wins you the entire game.

Defeating the deepfake: stopping laptop farms and insider threats

Cloudflare One is partnering with Nametag to combat laptop farms and AI-enhanced identity fraud by requiring identity verification during employee onboarding and via continuous authentication.

Google quantum-proofs HTTPS by squeezing 15kB of data into 700-byte space

Merkle Tree Certificate support is already in Chrome. Soon, it will be everywhere.

How I use Claude Code

The research-plan-implement workflow I use to build software with Claude Code, and why I never let it write code until I’ve approved a written plan.

How Balyasny Asset Management built an AI research engine for investing

See how Balyasny built an AI research system with GPT-5.4, rigorous model evaluation, and agent workflows to transform investment analysis at scale.

How Databricks uses Databricks for LLM-Powered PII Detection and Governance

Learn how Databricks automates Personally Identifiable Information (PII) detection in logs and databases using LLMs on Databricks. By combining multi-tier labeling, smart prompt engineering and multi-model orchestration, we achieved precision rates up to 92% and recall up to 95%—techniques being integrated back into the product to benefit all customers.

Learning to Reason for Hallucination Span Detection

From Apple Machine Learning Research

I Struggled With System Design Interview Until I Learned This Framework

Written by Neo Kim

ARTICLE (satellites-go-brrr)

Scenario Modeling and Array Design for Non-Terrestrial Networks (NTNs)

GITHUB REPO (google-do-the-thing)

Google Workspace CLI

ARTICLE (boss-without-the-manual)

Leading Beyond the Framework 🧭 — with Richard Hughes-Jones

ARTICLE (oops-crashed-computers)

How a 12-Word Issue Title Owned 4,000 Developer Machines

ARTICLE (chrome-zoom-zoom)

Amid new competition, Chrome speeds up its release schedule

ARTICLE (keyboard-go-click)

Making keyboard navigation effortless

ARTICLE (robot-brain-time)

Build Your AI Agent the Right Way

ARTICLE (change-makes-scared)

Stability is a Trap

Want to reach 200,000+ engineers?

Let’s work together! Whether it’s your product, service, or event, we’d love to help you connect with this awesome community.

🔒 Meta’s AI Smart Glasses Spark Major Privacy Backlash as Workers Report “We See Everything” (6 min)

Brief: Meta’s AI-powered Ray-Ban glasses are collecting intimate user data including bathroom visits and naked bodies, which data annotators in Kenya process without users’ knowledge; the company’s opaque privacy policies and subcontractor practices leave Swedish users unaware that their personal videos are shared globally for AI training, raising serious GDPR compliance concerns.

⚠️ Alibaba’s Qwen AI Team In Turmoil After Key Researcher Exits (4 min)

Brief: Alibaba’s lead Qwen researcher Junyang Lin suddenly resigned, triggering a wave of departures from the AI team’s core members just as the company released Qwen 3.5, an exceptionally powerful family of open-weight models ranging from 2B to 397B parameters that achieve remarkable performance even at smaller sizes.

🤖 OpenAI Launches GPT-5.4: A Major Upgrade for Professional Work and Computer Use (5 min)

Brief: OpenAI released GPT-5.4, its most capable frontier model combining advanced reasoning, coding, and agentic workflows with native computer-use capabilities, achieving state-of-the-art performance on professional knowledge work, achieving 83% parity with industry professionals, and introducing tool search to reduce token usage by 47% while enabling agents to operate across larger tool ecosystems.

💻 Apple Launches MacBook Neo at Breakthrough $599 Price Point (2 min)

Brief: Apple unveils MacBook Neo, its most affordable laptop ever, featuring a durable aluminum design in four colors, a 13-inch Liquid Retina display, A18 Pro chip for 50% faster everyday performance, and up to 16 hours of battery life — all starting at just $599 with availability beginning March 11.

🤖 Study Questions Whether AGENTS.md Files Actually Help AI Coders (4 min)

Brief: ETH Zurich researchers found that LLM-generated context files hurt AI coding performance by 3% while increasing costs over 20%, though human-written files provide marginal 4% gains at the cost of higher inference expenses, challenging industry recommendations to include them.

This week’s tip:

Leverage ripgrep’s --type and --glob patterns with FPAT-like regex for multi-line matching without loading files into memory. ripgrep is orders of magnitude faster than grep/awk for code search, especially when combined with --multiline (-U) and --max-columns to handle generated or minified code safely.

Wen?

Searching across large TypeScript/Rust codebases for async/await patterns: ripgrep’s memory-mapped I/O and parallel search by default beats grep even with xargs -P.

Finding multi-line regex in JSON config or log files: Use --multiline with lookahead to avoid OOM on huge files.

Narrow search scope before slow operations: Combine with --files-with-matches -c to get counts first, then feed results to parallel processing tools like GNU parallel.

Take interest and even delight in doing the small things well.

Jim Rohn

That’s it for today! ☀️

Enjoyed this issue? Send it to your friends here to sign up, or share it on Twitter!

If you want to submit a section to the newsletter or tell us what you think about today’s issue, reply to this email or DM me on Twitter! 🐦

Thanks for spending part of your Monday morning with Hungry Minds.

See you in a week — Alex.

Icons by Icons8.

Great, highly valuable Substack, but I was somewhat taken aback by the subtle agist part of the title in this article. '60 year old tries Claude Code'. The strong implication (as I see it, at least) is that being 60 represents people who are technically ignorant and naive.

I'm 78 and, as a junior in high school (1965), I was privileged with access to a small IBM mainframe, complete with card punch reader/writer, a physical console with toggle-switch registers, an insanely noisy line printer and all the blinkenlights. I have been actively coding in the domain of "AI" and chatbots for many years, including, now, the application of LLM-based tech since reading the all you need is attention paper, including the use of Claude Code and having tried several other AI coding tools and platforms.

Not intended as a rant, but hope to increase author sensitivity concerning use of an age group as a demographic.